Review: Samsung 840 250GB SSD

It was just a few weeks ago that I took a look at Samsung's flagship consumer-grade SSD, the 840 Pro. In this article I'm taking a look at the standard 840 series SSD, the first SSD in the world to use TLC NAND. TLC NAND can store 3 bits of data per cell, whereas MLC NAND can store only 2. The obvious attraction of TLC NAND is its lower cost.
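To put the 3-bit versus 2-bit difference into numbers, here is a small Python sketch. It is a deliberately simplified model that assumes the full 250GB (decimal) is stored in raw cells, ignoring error correction, spare area and over-provisioning, so the cell counts are illustrative only and are not Samsung's figures. The helpers `voltage_states` and `cells_needed` are hypothetical names made up for this example.

```python
# Simplified model of NAND cell density (assumption: no ECC, spare area
# or over-provisioning). It only shows how bits per cell scale.

def voltage_states(bits_per_cell: int) -> int:
    """Number of distinct charge levels a cell must tell apart."""
    return 2 ** bits_per_cell


def cells_needed(capacity_bits: int, bits_per_cell: int) -> int:
    """Cells required to hold capacity_bits, rounded up."""
    return -(-capacity_bits // bits_per_cell)  # ceiling division


if __name__ == "__main__":
    capacity = 250 * 10**9 * 8  # 250 GB (decimal) expressed in bits
    for name, bits in (("MLC", 2), ("TLC", 3)):
        print(f"{name}: {bits} bits/cell, {voltage_states(bits)} states, "
              f"{cells_needed(capacity, bits):,} cells")
```

Under these assumptions, the sketch reports four charge states for MLC and eight for TLC, and roughly 1.0 trillion versus 0.67 trillion cells. One extra bit per cell cuts the number of cells needed by a third, which is largely where the cost saving comes from, but each cell then has to distinguish twice as many charge levels.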

The question, then, is how TLC NAND compares to MLC in terms of performance and durability. Samsung are very confident in the durability of their own TLC NAND, and equally confident that performance will still be top drawer.

Whilst I can easily show you how this SSD performs in this review, durability is another matter. The testing period for a review sample is only a couple of weeks, which is clearly not enough time to make any meaningful predictions about TLC NAND durability. Fear not, though: after this article is published, I will start a long-term testing phase on the Samsung 840 series SSD and will publish quarterly reports on how well its TLC NAND copes with a normal consumer PC workload.

The review sample that Samsung sent was the 250GB version, so let's find out how the Samsung 840 performs in our range of tests.

Samsung company information

Samsung should need no introduction, but those of you who would like to find out more about Samsung can do so at their website.

The Samsung 840 - 250GB SSD

Now it's time to take a look at the drive itself and what it shipped with.

Packaging

The SSD I received was a retail unit, so let's start with the packaging.

Box front

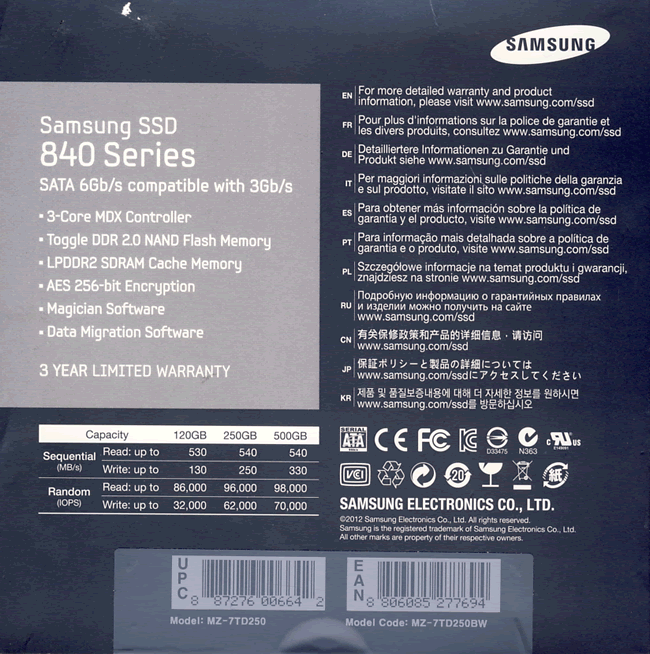

Box rear

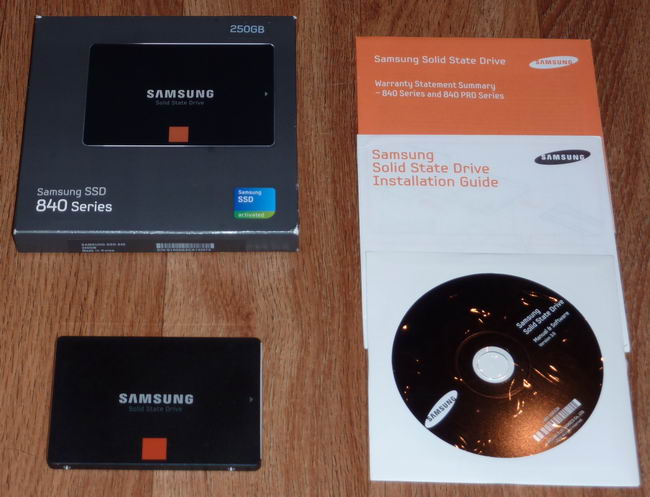

Inside the box

The complete package

The Samsung 840 package contained the 250GB SSD itself, a quick installation booklet, warranty information, and a software support DVD-ROM.

Drive top

The top of the drive is made of plastic with metal shielding.

Drive bottom

On the underside of the SSD is a label which displays the model number and storage capacity, and indicates that the SSD was manufactured in Korea.

The case itself is 7mm thick and is designed to fit a standard 2.5-inch drive bay, or a 3.5-inch drive bay when used with a 3.5-inch to 2.5-inch converter bracket.

Now let's head to the next page, where we look in more detail at the Samsung 840 SSD.