|

|

Review: Toshiba ADVERTISEMENT

|

Despite how far SSDs have come, where even

the most basic SSD beats every hard disk on the market on performance, hard

disks do still currently have one significant advantage in that they are much more

affordable for storing data. In this review, we will take a look at Toshiba's

latest 1TB 2.5" 9.5mm HDD, which features two platters that spin at

5400RPM. Many competing 1TB 2.5” hard disks have a 12.5mm height to fit in 3

platters, making them too tall for laptop bays designed to take a 9.5mm hard

disk.

As a frequent use for large capacity HDDs

is for storage of bulky files such as photos and video, in this review we will

focus heavily on file handling performance, along with testing real world

performance using VirtualBox.

Toshiba Company Information

Toshiba is a well-known manufacturer of a

wide range of consumer electronics, including TVs, laptops, Ultrabooks and

set-top DVD & Blu-ray players. They also make a wide variety of consumer

and commercial equipment such as electric motors, photocopiers, LED lighting, imaging

products such as for MRI and so on.

Further information on Toshiba can be found

on their website.

Retail packaging

Unlike most retail products, this hard disk

was supplied in little other than an anti-static bag inside a heavily padded

cardboard box.

Product photos

Now, let’s take a look at the hard disk:

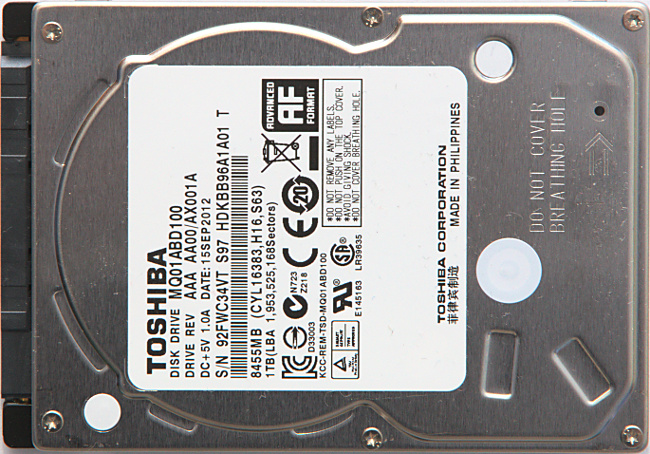

Front

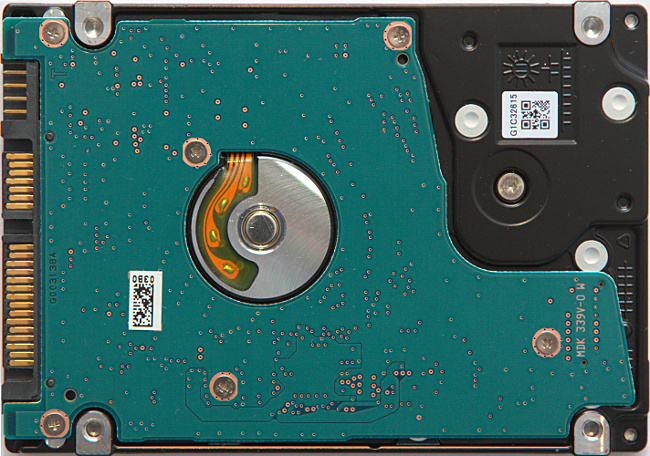

Reverse side

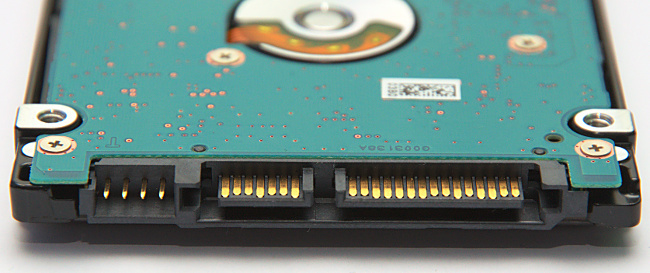

Close-up of auxiliary pins & SATA interface

Product Specifications

The following are the specifications, as

provided on the Toshiba website:

Features

- Model:

MQ01ABD100 - Formatted capacity: 1,000 GByte

- Form factor: 2.5 inch

- Interface type: Serial ATA

- Supported interface standards: ATA-8, Serial ATA 3.0

- Shock detection: Shock sensor circuit

- S.M.A.R.T.: The SMART command set is supported.

Physical parameters

- Number of platters: 2

- Number of heads: 4

- Bytes/sector (Host): 512

- Bytes/sector (Disk): 4096 kByte

Access times

- Average seek time: 12 ms

- Maximum seek time: 22 ms

- Track-to-track seek time: 2 ms

Transfer rates

- SATA (Host): max. 3.0 Gbit/s

Rotational speed

- Rotational speed: 5,400 rpm

Buffer

- Buffer size: 8,192 kByte

Reliability specifications

- MTTF:

600,000 Hours - Seek error rate: 1 error per 10^6 seeks

- Non-recoverable error rate: 1 error per 10^14 seeks

Power consumption

- Start (Maximum): 4.5 W

- Seek (Average): 1.85 W

- Read (Average): 1.5 W

- Write (Average): 1.5 W

- Low-power idle (Average): 0.55 W

- Sleep (Average): 0.15 W

- Stand-by (Average): 0.18 W

Power supply

- Supply voltage: +5 V (+/- 5 %)

Mechanical specifications

- Drive width: 69.85 mm

- Drive height: 9.5 mm

- Drive depth: 100 mm

- Drive weight: 0.117 kg

- Drive orientation: The drive can be installed in all axes (6 directions)

Temperature

- Operating:

From 5 °C to 55 °C - Non-operating: From -40 °C to 65 °C

Humidity

- Operating:

From 8 % to 90 % - Non-operating: From 8 % to 90 %

Vibration

- Operating (Maximum): 1 G, with 5 - 500 Hz

- Non-operating (Maximum): 5 G, with 15 - 500 Hz

Shock

- Operating (Maximum): 400 G, with 2 ms half sine wave

- Non-operating (Maximum): 900 G, with 1 ms half sine wave

- Shipping (Maximum): 0.7 m free drop with no unrecoverable error. Applied shocks in

each direction of the drive’s three mutually perpendicular axes, one axis

at a time (Packed in Toshiba’s original shipping package).

Altitude

- Operating:

From -300 m to 3,000 m - Non-operating: From -300 m to 12,000 m

Acoustic noise

- Idle mode (Disk spinning): Max. 23 dB

- Random seek: Max. 24 dB

Now let’s head to the next page where we

will look at our test PC and testing procedures…