Introduction

Welcome to Myce’s review of the Toshiba HK4E series of SATA

Enterprise SSDs.

This review marks an exciting step forward for Myce as it is

the first review in which we have used our new OakGate Test Platform. The new

OakGate test bench is a state-of-the-art platform which provides us with the

ability to test all types of SSDs, including SATA, SAS 2, SAS 3, and PCIe/NVMe,

in one integrated solution. We plan to publish a review/profile of our new OakGate

Test Platform in the near future.

The Toshiba HK4E is available in capacities of 200, 400, 800,

and 1600GB. The subject of this review is the 1.6TB model – the THNSN81Q60CSE.

In this review, I draw readers’ attention to the Myce/OakGate 4K Latency Write Test, which offers a fascinating insight into the behaviour and impact of the Toshiba THNSN81Q60CSE’s firmware – please see Page 6.

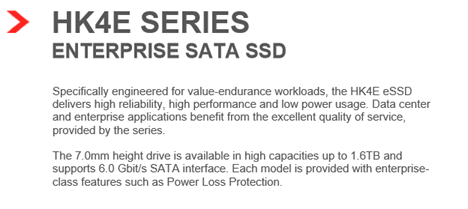

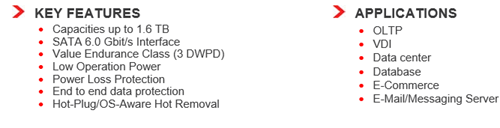

Market Positioning and Specification

Market Positioning

This is how Toshiba positions the HK4E Series –

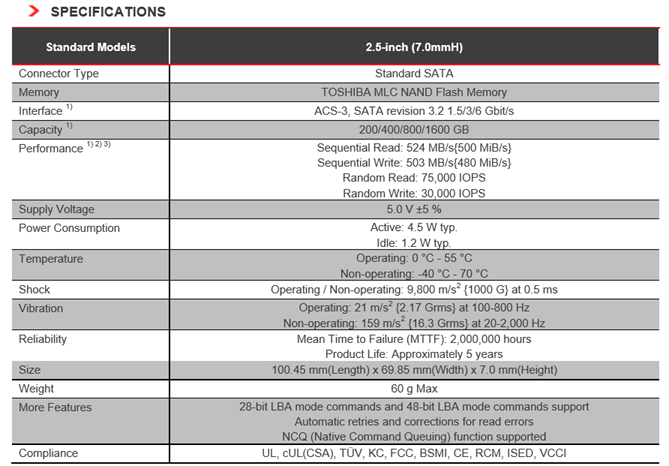

Specification

Here is Toshiba’s specification for the HK4E Series –

Here is a picture of the Toshiba THNSN81Q60CSE that I tested –

The Toshiba HK4E Series uses Toshiba’s 15nm MLC NAND and a

proprietary Toshiba controller.

Now let's head to the next page to look at Myce’s Enterprise Testing Methodology.

Testing Methodology

Please click here to view or download a detailed introduction to Myce’s Enterprise Class Solid State Storage (‘SSS’) Testing Methodology as a PDF.

Put briefly:

All testing is performed on a state-of-the-art OakGate Technology test bench.

We perform two sets of Performance Tests:

1. A full set of the Storage Networking Industry Association’s (‘SNIA’) tests with mandatory parameters, as specified in their Solid State Storage Performance Test Specification Enterprise V1.0.

2. A set of tests, known as the ‘Myce/OakGate Full Characterisation Test Set’, that provides readers with a fuller characterisation of the solution.

We also review other important factors such as Data

Reliability and Failover features.

Before we move on, let’s remind ourselves of some basics –

When reviewing the performance of an SSS solution there are

three basic metrics that we look at:

1. IOPS – the number of Input/Output Operations per Second

2. Bandwidth – the number of bytes transferred per second (usually measured in Megabytes per second, ‘MB/s’)

3. Latency – the amount of time each IO request will take to complete (usually, in the context of SSS solutions, measured in Microseconds, which are millionths of a second).
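For a fixed transfer size, the first two metrics are directly related: Bandwidth is simply IOPS multiplied by the block size. The following is a minimal sketch of that arithmetic and of the Microsecond conversion. The function names and the sample figures are illustrative assumptions, not results from this review, and 1 MB is assumed to be 1,000,000 bytes.

```python
def bandwidth_mb_per_s(iops: float, block_size_bytes: int) -> float:
    """Bandwidth in MB/s for a workload with one fixed block size.

    Assumes 1 MB = 1,000,000 bytes (decimal megabytes).
    """
    return iops * block_size_bytes / 1_000_000


def microseconds_to_seconds(latency_us: float) -> float:
    """Convert a latency in microseconds (millionths of a second) to seconds."""
    return latency_us / 1_000_000


if __name__ == "__main__":
    # Hypothetical figures, chosen only to illustrate the arithmetic.
    iops = 10_000
    block_size = 4096  # a 4K transfer, in bytes
    print(f"{iops} IOPS at {block_size} bytes = "
          f"{bandwidth_mb_per_s(iops, block_size):.2f} MB/s")
    print(f"250 microseconds = {microseconds_to_seconds(250)} seconds")
```

This is why small-block tests are usually reported in IOPS and large-block tests in MB/s: the same drive can show modest Bandwidth at 4K and modest IOPS at 128K.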

It is true to say that IOPS and Bandwidth had both been growing rapidly before the advent of SSS solutions, but Latency can only be decreased significantly by eliminating mechanical devices. Reduced Latency is therefore the single most important performance benefit that SSS solutions deliver.

Latency in a technical environment is synonymous with delay.

In the context of an SSS solution, it is the amount of time between an IO request being issued and that request being serviced.

Bandwidth, also commonly referred to as ‘Throughput’, is the amount of data that can be transferred between a storage device and a host in a given amount of time. In the context of SSS solutions it is typically measured in Megabytes per second (MB/s).

A great enterprise SSS solution offers an effective balance of all three metrics. High IOPS and Bandwidth are simply not enough if Latency (the delay in an IO operation) is too high. As we will see in the test results presented below, for a given number of outstanding IO requests, IOPS will inevitably decrease as Latency increases.

Queue Depth is the average number of IO requests outstanding. If you are running an application and the Average Queue Depth is one or higher while CPU utilisation is low, then the application’s performance is most probably suffering from a ‘Storage Bottleneck’.
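Queue Depth also ties Latency and IOPS together. By Little's Law, the average number of outstanding requests equals the completion rate multiplied by the average time per request, so at a fixed Queue Depth a higher Latency means lower IOPS. The following is a minimal sketch of that relationship; the function name and the sample figures are illustrative assumptions, not measurements from this review.

```python
def iops_from_latency(queue_depth: float, avg_latency_us: float) -> float:
    """Estimate IOPS from Little's Law: queue_depth = IOPS * latency.

    avg_latency_us is the average time per IO in microseconds.
    """
    if avg_latency_us <= 0:
        raise ValueError("avg_latency_us must be positive")
    return queue_depth * 1_000_000 / avg_latency_us


if __name__ == "__main__":
    # Hypothetical latencies at a fixed Queue Depth of 1:
    # IOPS falls as latency rises.
    for latency_us in (50, 100, 200):
        print(f"QD=1, {latency_us} us per IO -> "
              f"{iops_from_latency(1, latency_us):.0f} IOPS")
```

The same formula shows why raising the Queue Depth can lift IOPS only as long as the drive's Latency does not rise in proportion.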

Another important factor to remember is that SSS performance

is influenced by previous workloads, not just the current workload, and

especially by what has previously been written to the drive. As specified in

the SNIA SSS PTS, the goal of all good Enterprise-level testing is to provide

consistent circumstances, so that results can be compared fairly across

different SSS solutions – it is for this reason that all of our tests start

with a purge of the drive, so that it starts in a ‘Fresh Out of the Box’ (FOB)

state. Most tests then have a pre-conditioning phase where the drive is put

into a ‘Steady State’ before the test phase begins. Put briefly, a ‘Steady

State’ is achieved when the performance of the drive no longer varies over time

and settles into a consistent level of performance for the workload in hand. You

can find a detailed explanation of ‘Steady State’ and how it is determined in

the SNIA tests in our Enterprise Testing Methodology paper, which can be viewed

or downloaded as a PDF by clicking here.
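To make the idea of ‘Steady State’ more concrete, here is a minimal sketch of the kind of check involved. It assumes the commonly cited SNIA PTS criteria: over a measurement window of five rounds, the range of the tracked values must be no more than 20% of their average, and the excursion of the best-fit straight line across the window must be no more than 10% of the average. The function name, parameter names, and sample data are hypothetical; the methodology paper and the specification itself remain the authoritative definition.

```python
from statistics import mean


def is_steady_state(values, window=5, max_range=0.20, max_slope=0.10):
    """Return True if the last `window` rounds look like steady state.

    Assumed criteria (see the lead-in): data excursion <= 20% of the
    window average, and best-fit-line excursion <= 10% of the average.
    """
    if len(values) < window:
        return False  # not enough rounds measured yet

    y = list(values[-window:])
    x = list(range(window))
    avg = mean(y)
    if avg == 0:
        return False

    # Criterion 1: spread between the highest and lowest round.
    data_excursion = max(y) - min(y)

    # Criterion 2: rise or fall of the least-squares line over the window.
    x_bar = mean(x)
    slope = (sum((xi - x_bar) * (yi - avg) for xi, yi in zip(x, y))
             / sum((xi - x_bar) ** 2 for xi in x))
    slope_excursion = abs(slope) * (window - 1)

    return (data_excursion <= max_range * avg
            and slope_excursion <= max_slope * avg)


if __name__ == "__main__":
    # Hypothetical per-round IOPS figures, for illustration only.
    print(is_steady_state([100, 101, 99, 100, 100]))   # settled
    print(is_steady_state([200, 150, 120, 105, 100]))  # still falling from FOB
```

A drive fresh from a purge typically fails this check for the first rounds, because performance is still falling from its FOB level.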

For interest, here are some generally accepted assumptions that differentiate the use of, and therefore the approach to testing, Enterprise/Server and Consumer/Client SSS solutions:

Enterprise/Server SSS assumptions:

1. The drive is always full

2. The drive is being accessed 100% of the time (i.e. the drive gets no idle time)

3. Failure is catastrophic for many users

4. The Enterprise market chooses SSS solutions based on their performance in steady state, recognising that steady state, full, and worst case are not the same thing

Consumer/Client SSS assumptions:

1. The drive typically has less than 50% of its user space occupied

2. The drive is accessed around 8 hours per day, 5 days per week, and typically data is written far less frequently

3. Failure is catastrophic for a single user

4. The consumer/client market generally chooses SSS solutions based on their performance in the FOB state

Now let's head to the next page to look at the results of our SNIA IOPS (Input/Output Operations per Second) Test.